Overview

When I was in college, I did an internship with a food delivery startup. While exploring serverless tech, that wonderful internship experience came to mind. Even though the system was quite simple and we didn’t face any issues at the time, I now realize how difficult it would have been to manage scaling if we had seen a sudden surge in orders. Serverless looks like an attractive option here because of its ease of development, pay-per-use cost, and worry-free scaling. So I decided to do a bit of retrospection by building a food delivery system with serverless tech, and I hope to share the best of my serverless learnings in this article.

Details

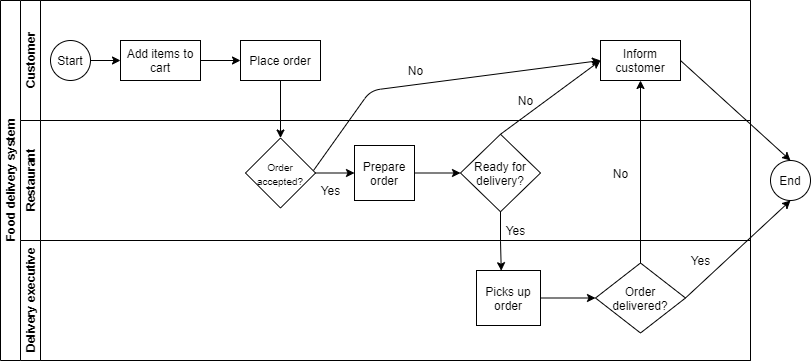

This project covers the very basic functionality of a food delivery platform. Its main purpose is to manage orders placed by customers. You can find the source code here. A typical flow on such a platform looks something like this:

- The customer opens up a list of restaurants.

- The customer adds food items to the cart from the menu.

- The customer places the order.

- The restaurant accepts the order.

- A delivery executive picks up the order from the restaurant.

- The executive delivers the order to the customer.

Azure functions:

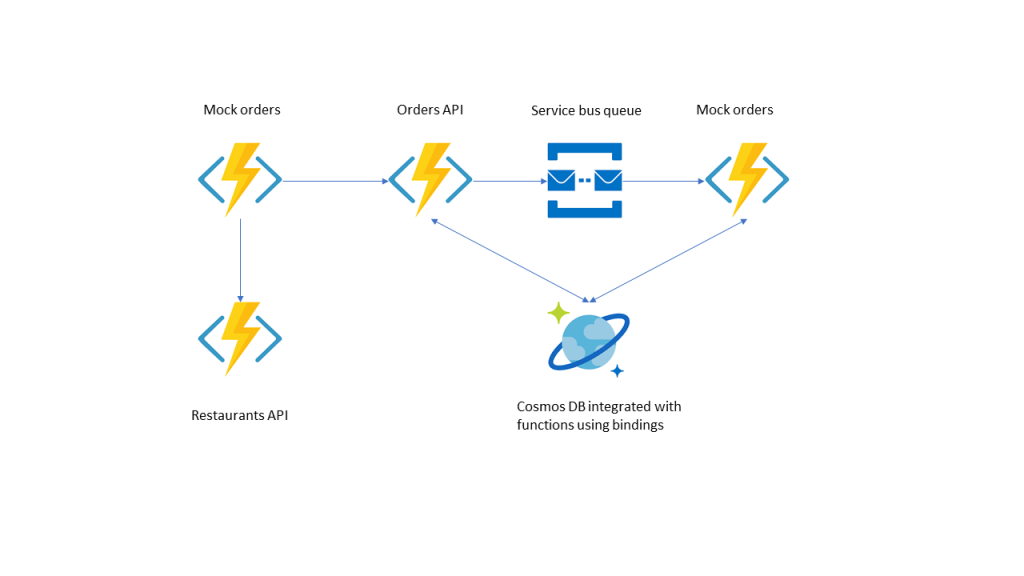

Each of these steps is basically an API, implemented in our case as an HTTP-triggered Azure function. The functions insert the order payload into a queue for further processing. We’ll have one function app to manage all these order-related APIs.

As we also need restaurant APIs to fetch the list of restaurants and their menu items, we can have a separate function app for restaurant-related APIs.

I’ve also created a function app for testing purposes. This function app simulates the order lifecycle by placing multiple orders every minute, continuously throughout the day.

Azure durable functions:

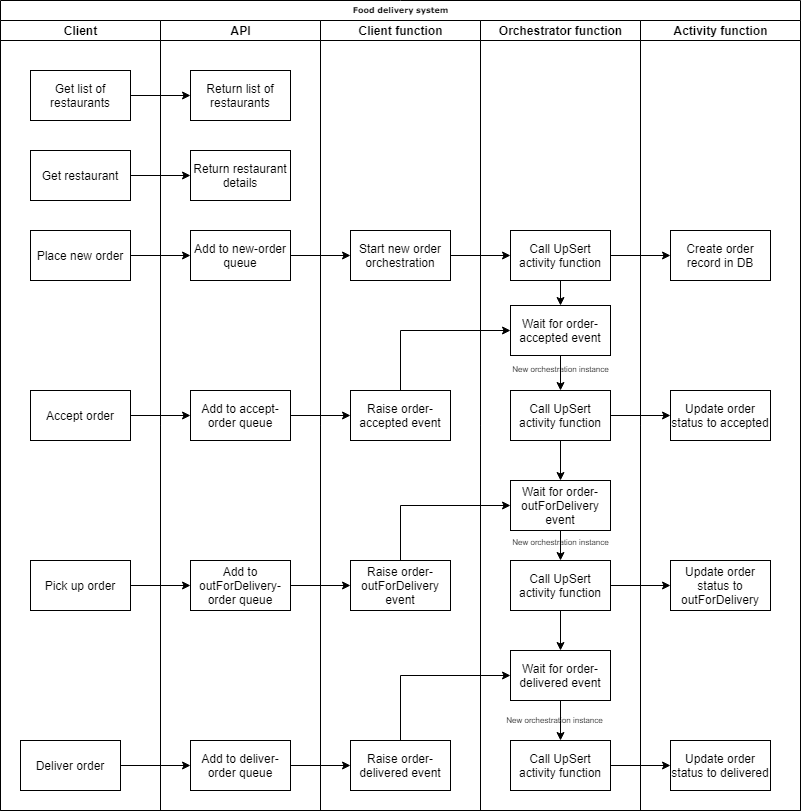

Once an order is passed to a queue, it is processed by a Service Bus queue-triggered client function. These client functions are responsible for starting an orchestration or for raising external events on a running one.
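
A minimal sketch of what such client functions could look like, assuming the Durable Functions 2.x in-process C# API. The `OrderMessage` type, the function names, and the `OrderOrchestrator` and `OrderAccepted` names are hypothetical; the queue names and the connection setting name are the ones used elsewhere in this article.

```csharp
using System.Threading.Tasks;
using Microsoft.Azure.WebJobs;
using Microsoft.Azure.WebJobs.Extensions.DurableTask;
using Microsoft.Extensions.Logging;

// Hypothetical message shape; the project's real order type may differ.
public class OrderMessage
{
    public string Id { get; set; }
}

public static class OrderClientFunctions
{
    // Starts one orchestration per new order, reusing the order ID as the instance ID
    // so that later events can be routed to the right orchestration.
    [FunctionName("OrderPlacedClient")]
    public static async Task OrderPlaced(
        [ServiceBusTrigger("order-new-queue", Connection = "ServiceBusConnection")] OrderMessage order,
        [DurableClient] IDurableOrchestrationClient client,
        ILogger log)
    {
        await client.StartNewAsync("OrderOrchestrator", order.Id, order);
        log.LogInformation("Started orchestration for order {OrderId}", order.Id);
    }

    // Raises an external event on the orchestration that is waiting for the restaurant.
    [FunctionName("OrderAcceptedClient")]
    public static Task OrderAccepted(
        [ServiceBusTrigger("order-accepted-queue", Connection = "ServiceBusConnection")] OrderMessage order,
        [DurableClient] IDurableOrchestrationClient client)
    {
        return client.RaiseEventAsync(order.Id, "OrderAccepted", order);
    }
}
```

Using the order ID as the instance ID is one possible design choice: it avoids a separate lookup table from orders to orchestration instances.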

Orchestrator functions are responsible for orchestrating activity functions and for waiting on external events, such as an order being accepted or delivered. One of the activity functions updates the order status using an Azure Cosmos DB output binding. We’ll have a separate function app for orchestrators, as we want orchestration to scale independently of the order APIs.

In almost any kind of delivery system, some human interaction is expected. In food delivery, it can be a restaurant accepting an order, a delivery executive accepting a delivery, or the delivery executive marking the order as delivered. Each of these interactions must happen within a fixed time. If the restaurant does not accept an order within, say, a minute, an alert should be raised for the restaurant. If the order still isn’t accepted within the next 5 minutes, the order should be cancelled and the customer informed.

Durable Functions offers a great way to manage human interaction in orchestrations using timer tasks. We can use the human interaction pattern: a client function raises an external event for the orchestration when the restaurant accepts the order. This lets us detect whether the external event has taken place without any polling. The orchestrator waits for the event and continues when it is received. If the event is not received within the specified time, the timer fires instead, and that case can be handled by an activity function.
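
The accept-or-timeout flow described above could be sketched roughly as follows, assuming the Durable Functions 2.x in-process C# API. The activity names (`NotifyRestaurant`, `CancelOrder`, `MarkOrderAccepted`) and the event name `OrderAccepted` are hypothetical, and the order ID is assumed to be the orchestration instance ID.

```csharp
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Azure.WebJobs;
using Microsoft.Azure.WebJobs.Extensions.DurableTask;

public static class OrderOrchestration
{
    [FunctionName("OrderOrchestrator")]
    public static async Task<string> Run(
        [OrchestrationTrigger] IDurableOrchestrationContext context)
    {
        string orderId = context.InstanceId;

        using (var cts = new CancellationTokenSource())
        {
            // Completes when a client function raises the "OrderAccepted" event.
            Task accepted = context.WaitForExternalEvent("OrderAccepted");

            // First deadline: alert the restaurant after 1 minute without a response.
            Task reminder = context.CreateTimer(
                context.CurrentUtcDateTime.AddMinutes(1), cts.Token);

            if (await Task.WhenAny(accepted, reminder) == reminder)
            {
                await context.CallActivityAsync("NotifyRestaurant", orderId);

                // Second deadline: cancel the order after 5 more minutes.
                Task cancelDeadline = context.CreateTimer(
                    context.CurrentUtcDateTime.AddMinutes(5), cts.Token);

                if (await Task.WhenAny(accepted, cancelDeadline) == cancelDeadline)
                {
                    await context.CallActivityAsync("CancelOrder", orderId);
                    return "Cancelled";
                }
            }

            // The event arrived in time: cancel any pending timer so the
            // orchestration is not kept alive until it fires.
            cts.Cancel();
        }

        await context.CallActivityAsync("MarkOrderAccepted", orderId);
        return "Accepted";
    }
}
```

Deadlines are computed from `context.CurrentUtcDateTime` rather than `DateTime.UtcNow` so that the orchestrator stays deterministic across replays.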

Azure Service Bus Queues:

Azure Service Bus is a great service for advanced queuing scenarios. The HTTP-triggered functions push orders to these queues, from which the durable client functions consume them. We can have a separate queue for each order status: order-new-queue, order-accepted-queue, order-outfordelivery-queue and order-delivered-queue.

Azure Cosmos DB:

Azure Cosmos DB can be used to store restaurant and order details. Its low-latency reads and writes help in building a responsive platform. The Azure Functions Cosmos DB output binding can be used to update the order status as part of the orchestration. I used the serverless tier of this service just to give it a try, and it has worked great for me in this project. Provisioning RU/s efficiently is a challenge, and that’s where the serverless tier comes in: we are charged only for the request units (RUs) actually consumed.

The API functions first need to get the order from Cosmos DB and then push the order message to the respective queue. This is handled neatly by the Cosmos DB input binding. One of the functions looks like this:
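
As an illustration of the output binding, here is a hedged sketch of an activity function that writes an order back to Cosmos DB. It assumes the 3.x Cosmos DB extension (the one that uses `collectionName` and `ConnectionStringSetting`) and a hypothetical `OrderDocument` type; the database, collection, and setting names are the ones that appear in this article.

```csharp
using System.Threading.Tasks;
using Microsoft.Azure.WebJobs;
using Microsoft.Azure.WebJobs.Extensions.DurableTask;
using Newtonsoft.Json;

// Hypothetical document shape; the project's real Order type may differ.
public class OrderDocument
{
    [JsonProperty("id")]
    public string Id { get; set; }

    [JsonProperty("status")]
    public string Status { get; set; }
}

public static class OrderActivities
{
    [FunctionName("UpdateOrderStatus")]
    public static async Task UpdateOrderStatus(
        [ActivityTrigger] OrderDocument order,
        [CosmosDB(
            databaseName: "FoodDeliveryDB",
            collectionName: "Orders",
            ConnectionStringSetting = "CosmosDbConnectionString")] IAsyncCollector<OrderDocument> orders)
    {
        // The output binding upserts: a document with an existing id replaces
        // the stored copy, so the full order should be passed in, not just the status.
        await orders.AddAsync(order);
    }
}
```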

    [FunctionName("OrderAccepted")]
    public async Task<IActionResult> OrderAccepted(
        [HttpTrigger(AuthorizationLevel.Anonymous, "get", Route = "orders/accepted/{orderId}")] HttpRequest req,
        // Output binding: the queue name is resolved from the OrderAcceptedQueue app setting
        [ServiceBus("%OrderAcceptedQueue%", Connection = "ServiceBusConnection")]
        IAsyncCollector<dynamic> serviceBusQueue,
        // Input binding: loads the order whose id and partition key match the route parameter
        [CosmosDB(
            databaseName: "FoodDeliveryDB",
            collectionName: "Orders",
            ConnectionStringSetting = "CosmosDbConnectionString",
            Id = "{orderId}",
            PartitionKey = "{orderId}")] Order order,
        ILogger log)
    {
        // The input binding yields null when no matching document exists
        if (order == null)
        {
            return new NotFoundResult();
        }

        try
        {
            await AddToQueue(order, serviceBusQueue);
            return new OkObjectResult(order);
        }
        catch (Exception ex)
        {
            log.LogError(ex, "Failed to queue the accepted order");
            return new InternalServerErrorResult();
        }
    }

It is as easy as that to integrate Cosmos DB with Azure Functions! It saves a lot of time and effort. Really!

Performance and cost

After deploying this solution to Azure, I monitored it for a few days and improved it gradually to make it as performance- and cost-optimized as I could. This was my favorite part of the whole project, as I learned A LOT from it, which I’ll describe in the next section. My goal was to handle the maximum number of orders with minimum cost and latency and, of course, no failed orders. Please feel free to reach out to me if you think I missed or misanalysed any point; I’m still learning 🙂

- The load testing function places 60 orders every minute. That is, 3600 orders every hour, and 86400 orders every day. The load obviously can be increased or decreased, but I’ve based my analysis on this much load.

- Order cost (total cost / total orders) is INR 0.02 (~$0.00027). That is, the cost of the whole cloud infrastructure to process one order is just INR 0.02 (sounds attractive enough for a small startup 😉).

- Average server response time is around 27ms.
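
The arithmetic behind these figures can be written down as a small helper. This is only a sketch: the class and method names are made up for illustration, and the daily cost it yields is implied by the per-order cost above rather than a separately measured number.

```csharp
public static class LoadEstimate
{
    // Orders placed per day at a constant per-minute rate.
    public static int OrdersPerDay(int ordersPerMinute) => ordersPerMinute * 60 * 24;

    // Infrastructure cost per day, assuming the per-order cost stays constant at this load.
    public static decimal DailyCostInr(int ordersPerMinute, decimal costPerOrderInr) =>
        OrdersPerDay(ordersPerMinute) * costPerOrderInr;
}
```

With the numbers above, `LoadEstimate.OrdersPerDay(60)` gives 86400 orders, and `LoadEstimate.DailyCostInr(60, 0.02m)` gives INR 1728 per day.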

Learnings

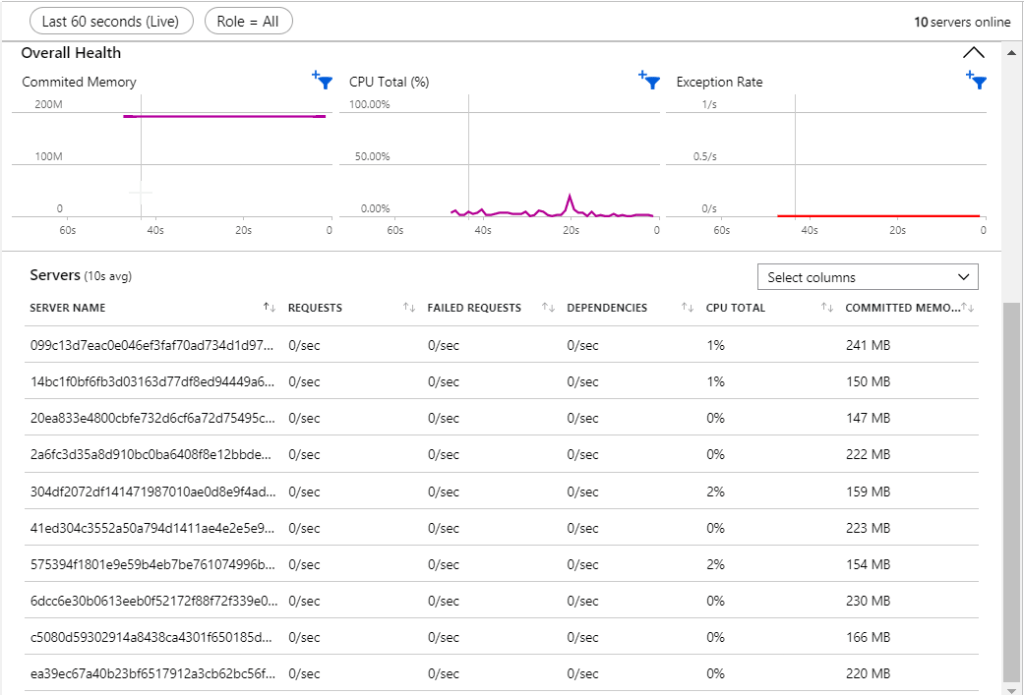

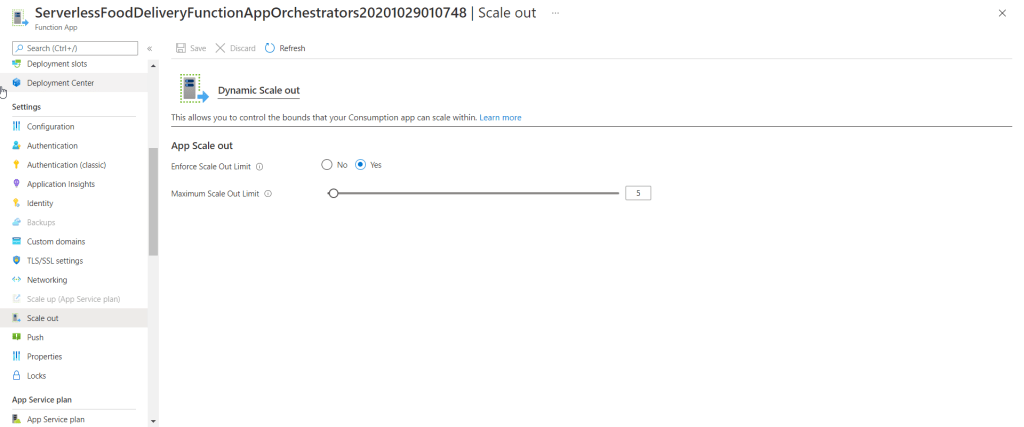

Enforcing maximum scale out limit: Earlier I was under the impression that I didn’t really need to worry about Azure Functions cost, as I’m charged for execution time, and that the number of instances doesn’t matter. While working on this project, I realized that’s not the whole picture. The number of instances has no impact on the Functions bill, as charges are based on executions, but it does impact storage account costs. The reason: each instance competes for blob leases. More instances mean more blob transactions, and hence higher transaction costs.

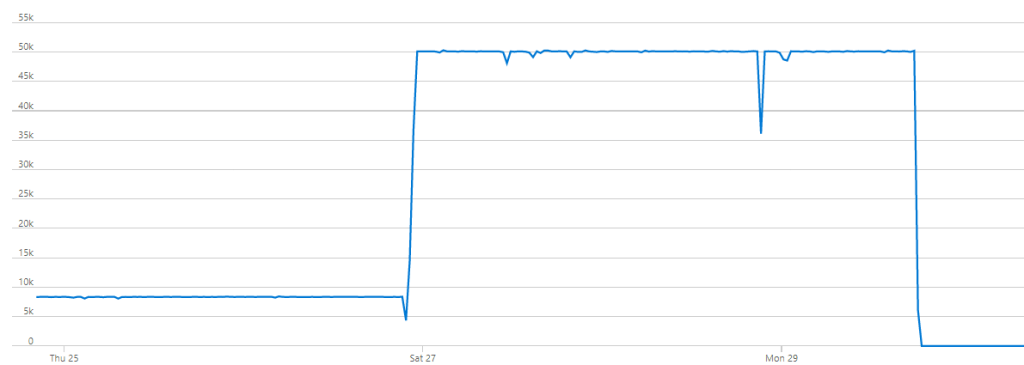

By default, Azure Functions running on the Consumption plan can scale out to a maximum of 200 instances. The documentation mentions that function scaling depends on the type of trigger. This can result in a lot of instances running in parallel, most of them with CPU consumption of just 0-1% (as shown in the image above). To get rid of those underused instances and make a fixed set of instances do more of the work, I turned on the “Enforce scale out limit” setting and set the maximum scale out limit to 5. This resulted in conservative scaling and far fewer blob transactions.

The dip in the image below marks the point at which I enabled this setting; the results improved from that very instant.

Azure functions logging: Durable Functions emits a significant amount of logs which, if left unconfigured, can result in high Application Insights data ingestion and therefore higher cost. Refer to the documentation on logging in Azure Functions and Durable Functions to configure logging properly. In this project, I log only well-formatted exceptions to avoid an unwanted dump of logs.
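
One way to reduce log volume is through the `logging` section of host.json. The fragment below is a sketch, not this project's actual configuration: the `Warning` levels are an assumption about how much to filter, and the three Durable Functions log categories are the ones listed in the Durable Functions diagnostics documentation.

```json
{
  "version": "2.0",
  "logging": {
    "logLevel": {
      "default": "Warning",
      "Host.Triggers.DurableTask": "Warning",
      "DurableTask.AzureStorage": "Warning",
      "DurableTask.Core": "Warning"
    },
    "applicationInsights": {
      "samplingSettings": {
        "isEnabled": true
      }
    }
  }
}
```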

Azure Queues vs Service bus queues: Initially, I used Azure Queues (Azure Storage queues) instead of Service Bus queues to trigger the queue-triggered functions (I don’t know why I made that choice; I have no justification for it). The Azure Queues function trigger uses a random exponential back-off polling mechanism, which led to delays of a few seconds in queue message processing when orders arrived at irregular intervals.

The polling interval can be capped using the maxPollingInterval property in host.json, but if the function is made to poll the queue very frequently, it will increase storage account costs. That’s why I felt more comfortable opting for Service Bus queues. They will also help with advanced queueing scenarios if required as the application grows and becomes more complicated.

Durable functions replays: Orchestrator functions are replayed in order to rebuild their local state. On each replay, unless handled, log messages are written again. This leads to duplicate log messages, which isn’t helpful in any way. Just add this line at the start of the orchestrator; it tells the logger to filter out replayed logs:
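
For reference, this is roughly where that setting lives in host.json for a Functions 2.x+ app that uses the Storage queue trigger. The 2-second value is purely illustrative and not a recommendation; a shorter interval means more storage transactions.

```json
{
  "version": "2.0",
  "extensions": {
    "queues": {
      "maxPollingInterval": "00:00:02"
    }
  }
}
```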

    log = context.CreateReplaySafeLogger(log);
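
In context, that line might sit at the top of an orchestrator like this. It is a sketch assuming the Durable Functions 2.x in-process C# API; the orchestrator and activity names are hypothetical.

```csharp
using System.Threading.Tasks;
using Microsoft.Azure.WebJobs;
using Microsoft.Azure.WebJobs.Extensions.DurableTask;
using Microsoft.Extensions.Logging;

public static class LoggingExample
{
    [FunctionName("LoggingOrchestrator")]
    public static async Task Run(
        [OrchestrationTrigger] IDurableOrchestrationContext context,
        ILogger log)
    {
        // Wrap the logger once; it then writes only when the orchestrator is not replaying.
        log = context.CreateReplaySafeLogger(log);

        log.LogInformation("Order {OrderId}: notifying restaurant", context.InstanceId);
        await context.CallActivityAsync("NotifyRestaurant", context.InstanceId);

        // Without the wrapper, the first message would be logged again
        // when the orchestrator replays after the activity completes.
        log.LogInformation("Order {OrderId}: restaurant notified", context.InstanceId);
    }
}
```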

CosmosDB: During testing I increased the number of orders by a factor of 6. The total number of RUs consumed should therefore increase by the same factor (as shown in the image below), and the same applies to cost. This predictability is what I liked about the serverless tier.

Conclusion

A serverless stack is, in my opinion, definitely a good choice for a system like this, and it seems to be the future as it brings a lot of nice things to the table. Impressive performance can be obtained with less development effort and cost. I feel small and mid-scale organizations can benefit a lot from this stack, as it can bring down time to market for their products without them worrying too much about IT operations.

I hope my project helps other developers learn or get started with developing systems using serverless architecture. I’ll keep updating it whenever I see scope for improvement or based on other folks’ suggestions. I also want to extend it so that organizations or developers working on a similar use case can use it as a template and build on top of it.

Source code: Food delivery system

Happy learning!

Update: I got an opportunity to talk about this topic in a session conducted by Pune Tech Community. Check out the recording of the session below:
